Mechanistic interpretability

New techniques are giving researchers a glimpse at the inner workings of AI models.

Hundreds of millions of people now use chatbots every day. And yet the large language models that drive them are so complicated that nobody really understands what they are, how they work, or exactly what they can and can’t do—not even the people who build them. Weird, right?

It’s also a problem. Without a clear idea of what’s going on under the hood, it’s hard to get a grip on the technology’s limitations, figure out exactly why models hallucinate, or set guardrails to keep them in check.

But in 2025 we got the best sense yet of how LLMs function, as researchers at top AI companies began developing new ways to probe these models’ inner workings and started to piece together parts of the puzzle.

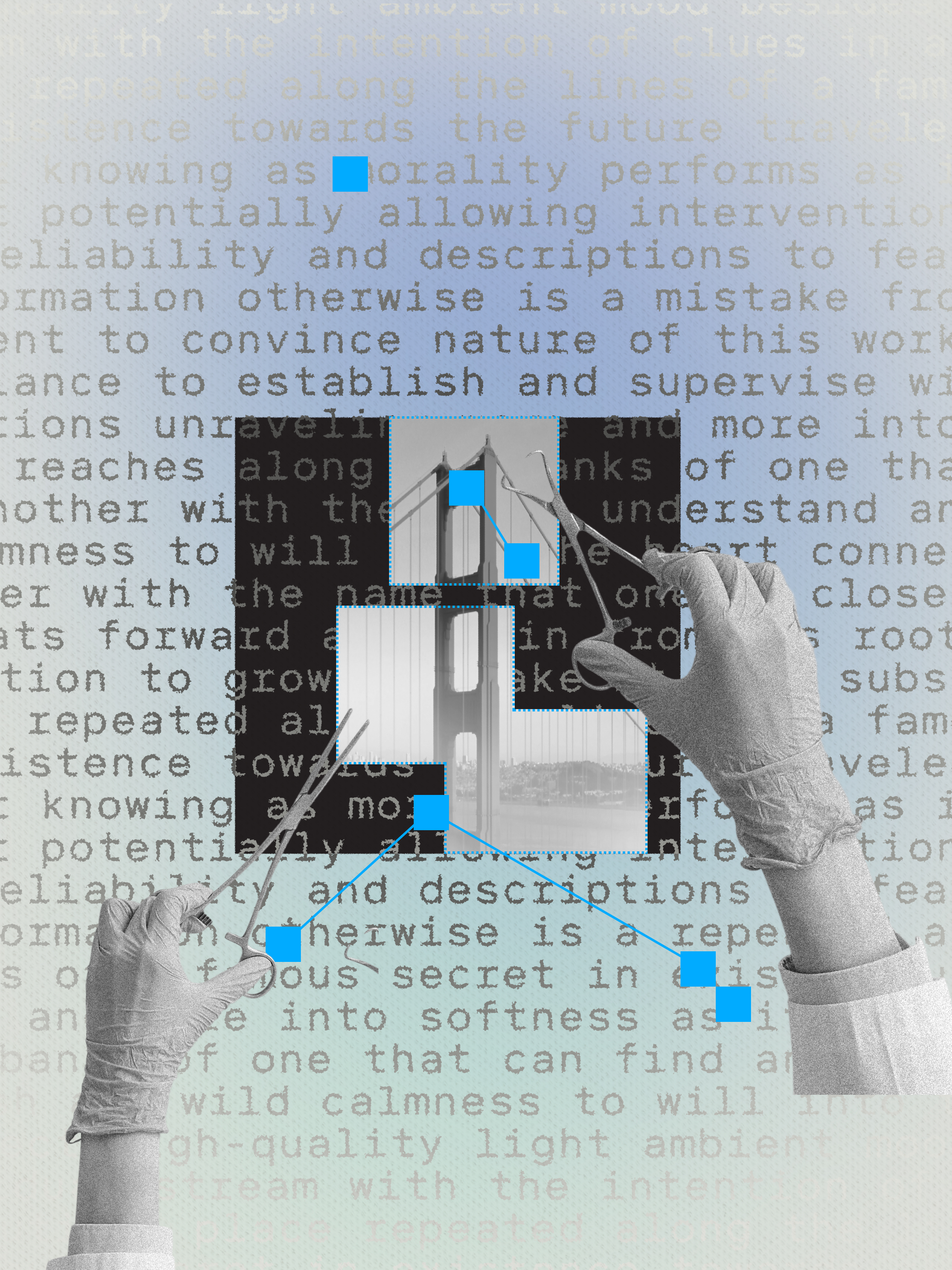

One approach, known as mechanistic interpretability, aims to map the key features and the pathways between them across an entire model. In 2024, the AI firm Anthropic announced that it had built a kind of microscope that let researchers peer inside its large language model Claude and identify features that corresponded to recognizable concepts, such as Michael Jordan and the Golden Gate Bridge.

In 2025 Anthropic took this research to another level, using its microscope to reveal whole sequences of features and tracing the path a model takes from prompt to response. Teams at OpenAI and Google DeepMind used similar techniques to try to explain unexpected behaviors, such as why their models sometimes appear to try to deceive people.

Another new approach, known as chain-of-thought monitoring, lets researchers listen in on the inner monologue that so-called reasoning models produce as they carry out tasks step by step. OpenAI used this technique to catch one of its reasoning models cheating on coding tests.

The field is split on how far you can go with these techniques. Some think LLMs are just too complicated for us to ever fully understand. But together, these novel tools could help plumb their depths and reveal more about what makes our strange new playthings work.
